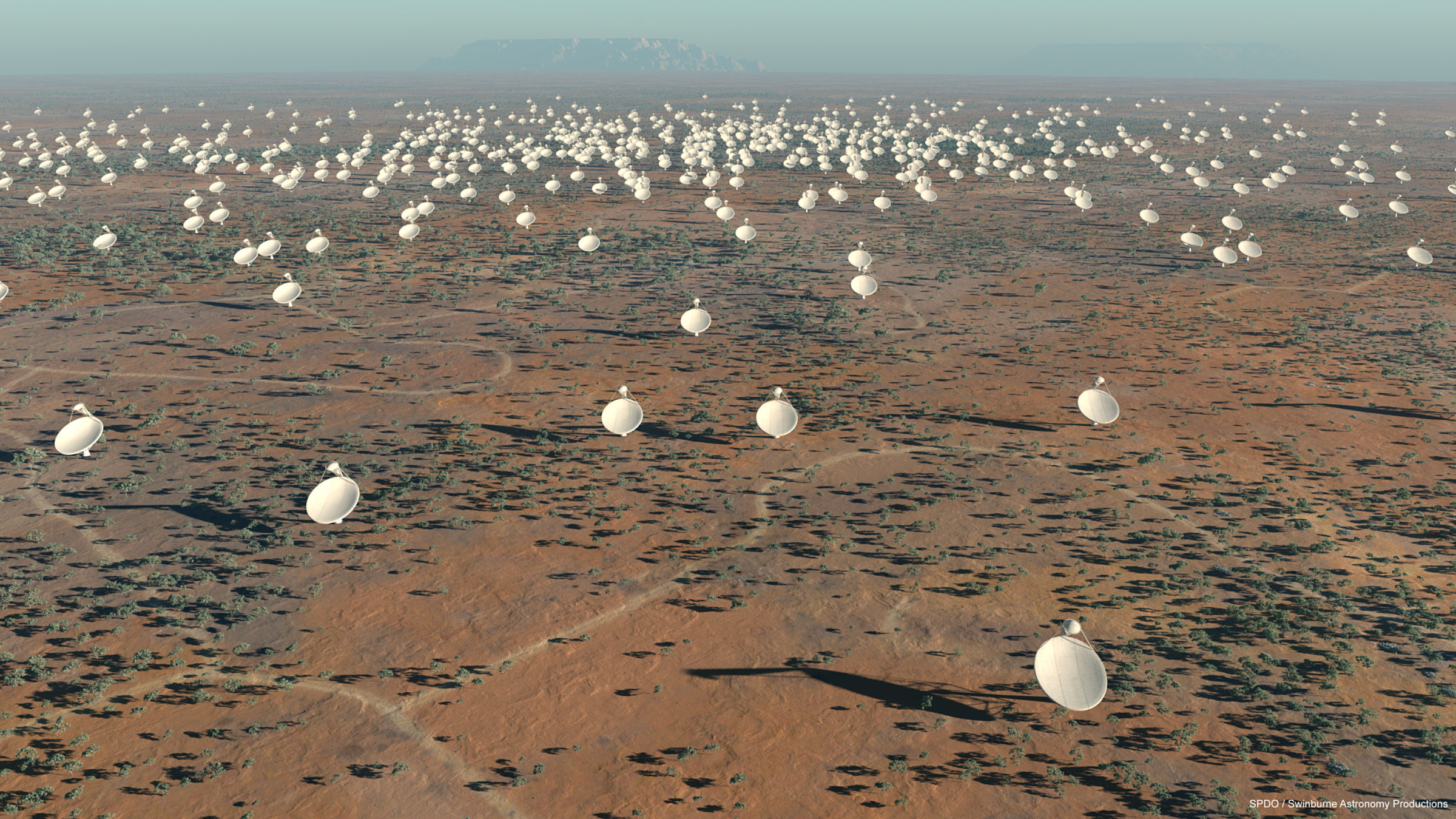

Since 1987 - Covering the Fastest Computers in the World and the People Who Run Them

As the largest-ever radio telescope, the Square Kilometre Array (SKA) will be a behemoth. As the name implies, the instruments of the massive radio telescope will span well over one square kilometer, using hundreds of dishes and hundreds of thousands of low-frequency aperture array telescopes spread across remote lands in Australia and South Africa that have as little human radio interference as possible. Costing billions of dollars, construction for the SKA was finally approved just six days ago. It won’t be finished until late in the 2020s, but researchers around the world are already preparing for what they anticipate will be an unprecedented deluge of radio astronomy data.

One such team hails from the Pawsey Supercomputing Centre in Perth, Western Australia, located a few hundred kilometers south of one of the SKA sites. In fact, Pawsey itself was “launched in 2014 with the goal of playing a critical role in the Square Kilometre Array project,” explained Ugo Varetto, Pawsey’s CTO, in a presentation at the virtual ISC 2021 conference held last week.

“We recently received 70 million dollars in funding to invest in replacing our computing, network and storage infrastructure,” Varetto said. “With the recently received funding, we acquired a brand new HPE Cray supercomputer named after a small marsupial endemic to areas close to Perth” – the computer is named Setonix – “in a separate procurement, we acquired 60 petabytes of object storage which will soon be integrated with the HPE supercomputer in the future.”

This equipment is powering, in part, precursor projects to prepare for Pawsey’s work on the SKA in the coming years: data ingestion, data visualization, data lifecycle management, data sharing – in short, data work. This is because the data from radio telescopes – and particularly the anticipated data load from the SKA – pose a serious burden on centers.

“Although the focus has been on radio astronomy, the center today allocates about 80 percent of the computational resources to other domains,” Varetto said. “But 80 percent of the storage is currently filled with radio astronomy data.”

In the absence of the SKA, Pawsey works with the Murchison Widefield Array (MWA), also located north of Perth. In the middle of the MWA, there’s a datacenter inside of a Faraday cage, which aggregates all the signals from all the spider-like antennae that cover the MWA using a mix of FPGAs and GPUs (“one of the biggest FPGA deployments in the world,” Varetto said) before the aggregated signals are sent to Pawsey in Perth.

“Now,” Varetto said, “let’s finally talk about pipelines, which is the actual subject of the presentation.” For the last seven years, Varetto explained, the general radio astronomy pipeline has been to receive, amplify, digitize and combine the signals, then move them into a hierarchical storage management system equipped with tape libraries, sans post-processing and calibration. “Calibrations might happen in different places and at different stages,” Varetto said, “but final calibration steps are normally required to, for example, calibrate the position of a source with respect to another source.”

“This model works quite well when the bandwidth is low and the observations are not too big – you are talking about gigabytes to a few terabytes range,” Varetto continued. “But the issue is that when bandwidth and data size [increase], more processing needs to be moved into the pipeline because it cannot be easily performed by individual researchers.”

Ergo, he argued, functions like calibration and image reconstruction will need to be performed before the data is stored. “… All the processing pipelines including that analysis will need to be run on supercomputers.”
